Exploring Tesla’s Full Self-Driving Technology: Building a 3D World from Camera Images

Tesla’s Full Self-Driving (FSD) technology turns ordinary 2D camera images into a dynamic 3D model of the world. Two recent Tesla patent applications, on Vision-Based Occupancy Determination and Vision-Based Surface Determination, show how FSD perceives its environment through vision alone.

This article is part of a series. To get the most out of it, we recommend reading the other pieces as well:

- How FSD Works Part 1

- How FSD Works Part 2

- How FSD Works Part 3 (this article)

- Tesla’s Universal AI Translator

- How Tesla Optimizes FSD

- How Tesla Will Label Data with AI

Constructing a 3D Environment from 2D Images

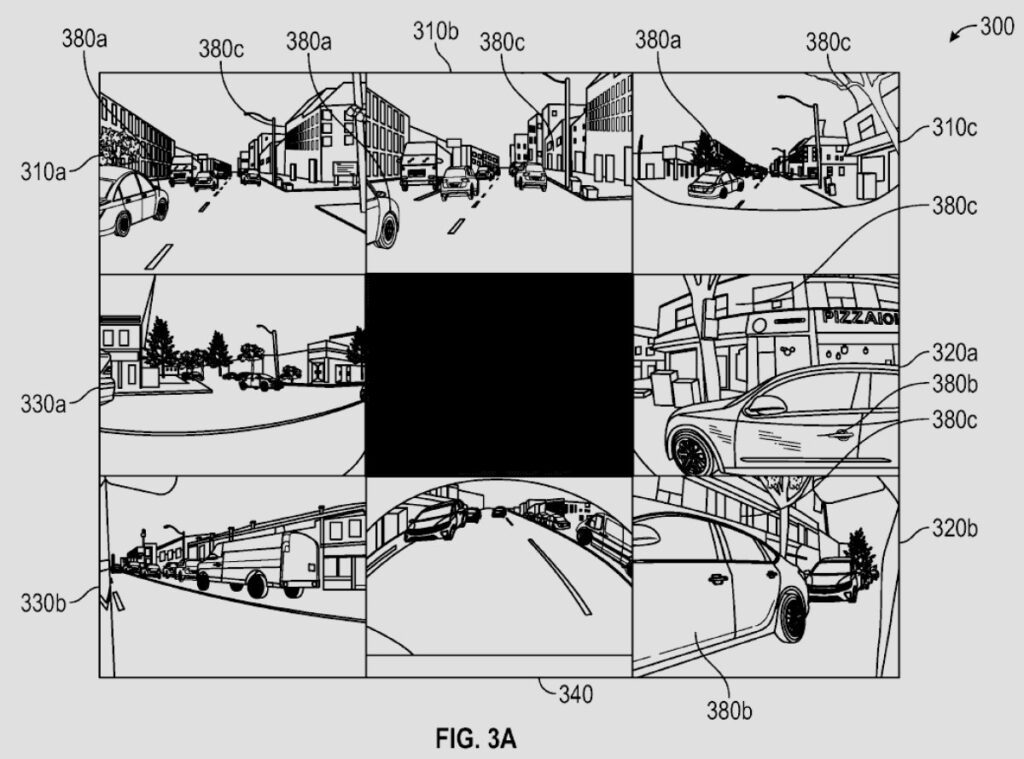

Unlike LiDAR-based systems, which measure distance and depth directly, Tesla’s FSD infers depth, shape, motion, and context from the pixel patterns its cameras capture. By combining these cues across multiple camera views, FSD constructs the 3D world it operates in.
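To make "inferring depth from pixels" concrete, here is a minimal sketch of the standard pinhole camera back-projection: given a pixel and an estimated depth, it recovers a 3D point in camera coordinates. This is textbook geometry, not a description of Tesla’s implementation; the function name and the intrinsics values (`fx`, `fy`, `cx`, `cy`) are illustrative assumptions, and the depth is assumed to come from some upstream estimator.

```python
def unproject(u, v, depth_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) with an estimated depth into camera coordinates.

    fx, fy are focal lengths in pixels; (cx, cy) is the principal point.
    All values here are hypothetical, chosen only for illustration.
    """
    x = (u - cx) * depth_m / fx   # metres to the right of the optical axis
    y = (v - cy) * depth_m / fy   # metres below the optical axis
    return (x, y, depth_m)        # z is the distance along the optical axis


# A pixel 100 px right of the image centre, estimated to be 10 m away.
point = unproject(740.0, 360.0, 10.0, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
```

A LiDAR sensor measures `depth_m` directly; a vision-only system has to estimate it, which is why the quality of that estimate matters so much.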

Part 1: Vision-Based Occupancy Determination

Tesla’s “Artificial Intelligence Modeling Techniques for Vision-Based Occupancy Determination” patent describes how FSD identifies objects in its surroundings and the space they occupy. The system has evolved from outlining objects with bounding boxes to building a volumetric understanding of the scene.

FSD Pipeline: Pixels to Objects

- Image Input: Raw image data from vehicle cameras.

- Image Featurization: Processing images to extract relevant visual details.

- Spatial Transformation: Using a transformer model to project 2D features into a unified 3D representation of the environment.

- Temporal Alignment: Fusing 3D representations over time to capture spatial-temporal features.

- Deconvolution: Transforming fused data into predictions for each voxel in the 3D grid.

From these per-voxel predictions, FSD estimates whether each voxel is occupied and assigns it a velocity vector, so it knows both where things are and how they are moving.
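The output of the pipeline above can be pictured as a 3D grid in which every cell stores an occupancy estimate and a velocity vector. The following is a minimal sketch of such a data structure; the class names, grid dimensions, voxel size, and 0.5 threshold are assumptions for illustration and are not taken from the patent.

```python
from dataclasses import dataclass


@dataclass
class Voxel:
    occupancy: float = 0.0               # probability in [0, 1] that the cell is occupied
    velocity: tuple = (0.0, 0.0, 0.0)    # metres per second along x, y, z


class VoxelGrid:
    """Dense voxel grid around the vehicle (hypothetical layout)."""

    def __init__(self, size, voxel_m, origin):
        self.size = size          # (nx, ny, nz) cell counts
        self.voxel_m = voxel_m    # edge length of one voxel in metres
        self.origin = origin      # world position of the grid's minimum corner
        nx, ny, nz = size
        self.cells = [[[Voxel() for _ in range(nz)] for _ in range(ny)]
                      for _ in range(nx)]

    def index_of(self, point):
        """Map a world-space point to a voxel index, or None if outside the grid."""
        idx = tuple(int((p - o) // self.voxel_m)
                    for p, o in zip(point, self.origin))
        if all(0 <= i < n for i, n in zip(idx, self.size)):
            return idx
        return None

    def update(self, point, occupancy, velocity):
        """Store a prediction for the voxel containing `point`."""
        idx = self.index_of(point)
        if idx is None:
            return False
        i, j, k = idx
        self.cells[i][j][k] = Voxel(occupancy, velocity)
        return True

    def occupied(self, threshold=0.5):
        """Return indices of voxels whose occupancy meets the threshold."""
        nx, ny, nz = self.size
        return [(i, j, k)
                for i in range(nx) for j in range(ny) for k in range(nz)
                if self.cells[i][j][k].occupancy >= threshold]


# A 40 m x 40 m x 4 m volume at 0.5 m resolution, centred on the vehicle.
grid = VoxelGrid(size=(80, 80, 8), voxel_m=0.5, origin=(-20.0, -20.0, -2.0))
# Something 10 m ahead, moving away at 3 m/s.
grid.update((10.2, 0.3, 0.5), occupancy=0.9, velocity=(3.0, 0.0, 0.0))
```

Unlike a bounding box, this representation does not need to know what an object is: any cell can be marked occupied, which is what gives the system a volumetric view of the scene.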

The Output

These predictions are compiled into an occupancy map, which FSD’s planning system uses to make driving decisions based on the 3D model.
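As a rough illustration of how a planner might consume an occupancy map, the sketch below checks whether a candidate path crosses any occupied voxel at vehicle height. The function name, the dictionary representation, the threshold, and the height cutoff are all assumptions made for this example; a real planner would be far more involved.

```python
def path_is_clear(occupancy, path_cells, threshold=0.5, max_height_idx=3):
    """Return True if no cell on the path is blocked.

    occupancy  : dict mapping (i, j, k) voxel indices to occupancy probability
    path_cells : iterable of (i, j) ground-plane cells the vehicle would cross
    Voxels above max_height_idx are ignored, since the vehicle passes under them.
    """
    blocked = {(i, j)
               for (i, j, k), p in occupancy.items()
               if p >= threshold and k <= max_height_idx}
    return all(cell not in blocked for cell in path_cells)


occupancy = {
    (5, 2, 1): 0.90,   # obstacle at bumper height
    (7, 3, 6): 0.95,   # overhead structure, above the vehicle
    (6, 2, 0): 0.20,   # low-confidence detection, below the threshold
}

blocked_path = path_is_clear(occupancy, [(4, 2), (5, 2), (6, 2)])
free_path = path_is_clear(occupancy, [(6, 2), (7, 3)])
```

Here the first path is rejected because it crosses the obstacle at `(5, 2)`, while the second is accepted because the only confident detection along it sits overhead.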

Part 2: Vision-Based Surface Determination

Tesla’s “Artificial Intelligence Modeling Techniques for Vision-Based Surface Determination” patent focuses on understanding the attributes of the surfaces around the vehicle so it can navigate them safely.

Predicting Surface Attributes from Vision

An AI model analyzes camera imagery to predict each surface’s elevation, navigability, material, and key features.

Building the 3D Surface Mesh

The predicted surface data is then integrated into a 3D mesh that represents the environment around the vehicle.
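One common way to turn per-cell elevation predictions into a mesh is to treat them as a height map and connect neighbouring cells with triangles. The sketch below shows that generic technique; it is an assumption about how such a mesh could be built, not the method described in the patent, and the function name and cell size are hypothetical.

```python
def heightmap_to_mesh(heights, cell_m):
    """Build a triangle mesh from a 2D grid of elevations.

    heights : list of rows, each a list of elevations in metres
    cell_m  : spacing between neighbouring grid points in metres
    Returns (vertices, triangles), where triangles index into vertices.
    """
    rows, cols = len(heights), len(heights[0])
    vertices = [(c * cell_m, r * cell_m, heights[r][c])
                for r in range(rows) for c in range(cols)]

    triangles = []
    for r in range(rows - 1):
        for c in range(cols - 1):
            a = r * cols + c      # top-left corner of this grid square
            b = a + 1             # top-right
            d = a + cols          # bottom-left
            e = d + 1             # bottom-right
            triangles.append((a, b, d))   # split each square into two triangles
            triangles.append((b, e, d))
    return vertices, triangles


# A 3 x 3 patch of road with a small raised edge (for example, a curb) on one side.
heights = [
    [0.0, 0.0, 0.15],
    [0.0, 0.0, 0.15],
    [0.0, 0.0, 0.15],
]
vertices, triangles = heightmap_to_mesh(heights, cell_m=0.5)
```

Attributes such as navigability or material could then be attached per vertex or per triangle, so the mesh carries more than just geometry.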

Training for Surface Recognition

Correlating camera images with data from other sensors helps train the system to estimate distance and recognize surfaces.

Unified World Model

Combining the occupancy data with the surface data produces a comprehensive 3D world model that drives FSD’s decision-making.
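A simple way to picture this combination: a ground cell is usable only if the surface model says it is navigable and the occupancy model says nothing is standing on it. The sketch below encodes that rule; the `SurfaceCell` fields mirror the attributes named earlier, but the class, function, and sample values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class SurfaceCell:
    elevation_m: float   # height of the surface at this cell
    navigable: bool      # whether the surface itself can be driven on
    material: str        # e.g. "asphalt", "grass" (illustrative labels)


def drivable_cells(surface, occupied_columns):
    """Return the set of (i, j) cells that are navigable and unobstructed.

    surface          : dict mapping (i, j) to SurfaceCell
    occupied_columns : set of (i, j) cells with an occupied voxel above them
    """
    return {ij for ij, cell in surface.items()
            if cell.navigable and ij not in occupied_columns}


surface = {
    (0, 0): SurfaceCell(0.00, True, "asphalt"),
    (0, 1): SurfaceCell(0.00, True, "asphalt"),
    (0, 2): SurfaceCell(0.15, False, "concrete"),   # raised curb
}
occupied_columns = {(0, 1)}   # an object is standing on this cell

drivable = drivable_cells(surface, occupied_columns)
```

In this example only `(0, 0)` remains drivable: `(0, 1)` is good road but occupied, and `(0, 2)` is free but not navigable.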

Advancing Autonomous Systems

As Tesla refines these systems, FSD’s world model will continue to evolve, enhancing the capabilities of autonomous driving technology.